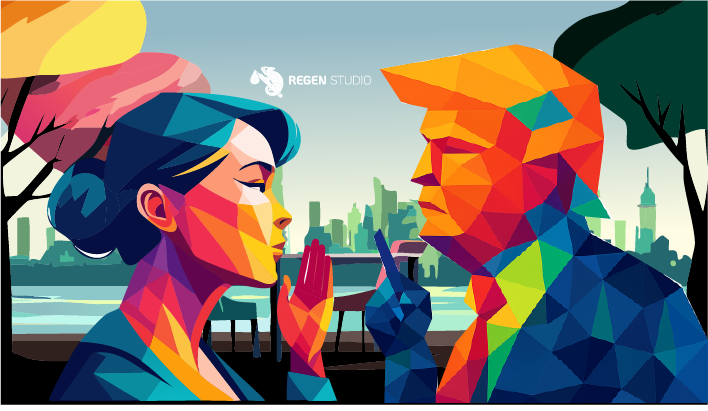

Having a conversation with ChatGPT is the same as whispering it in Donald Trump’s ear. A personal story about AI, privacy, and why we need mental models for digital self-defense — co-written with ChatGPT.

Until recently, I thought I had a solid handle on digital self-defense. But something shifted.

A few days ago, my wife and I found ourselves in a conversation with ChatGPT, which had taken on the role of relationship therapist. We shared real emotions and intimate thoughts.

It played the role of an independent third party perfectly and helped us figure out, without a fight, that we both just needed to be heard…

But who are we really talking to? We are giving OpenAI information that is meant to help us but that, in the wrong hands, could also be used to drive a wedge between us. Both existing laws and new developments in the U.S. give serious reason for concern about our privacy.

This urgency inspired a mental model I now use every day.

“Having a conversation with ChatGPT is the same as whispering it in Donald Trump’s ear.”

Ironically, this post was co-written with the help of ChatGPT. [read the conversation →] I figured this could hurt my chances with future U.S. visa applications, but I have no desire to go anyway. After Dutch King Willem-Alexander let Trump sleep in his palace, the Dutch seem to be out of the crosshairs. For now.

So I am taking the risk! Let’s unpack why this matters, and how you can protect yourself — and your community — from the invisible extractive forces behind the screen.

Risks of Legal Jurisdictions

Even when a government can be expected to follow the law, the law itself can pose serious risks.

The majority of LLMs in use today are owned, operated, and governed by U.S. corporations that must comply with laws like the CLOUD Act, which allows U.S. authorities to compel U.S. companies to hand over data in their possession, even when it is stored outside the U.S. Even ‘ethical AI models’ like Claude, built by U.S.-based Anthropic, run that risk.

Since last month, however, our privacy has also been threatened from an unexpected corner: a lawsuit filed by the New York Times against OpenAI for copyright infringement. [→]

In this lawsuit, the court granted a request from the New York Times ordering OpenAI to retain all user and API data indefinitely, something OpenAI rightly says would seriously contradict its privacy commitments. [→] Combined with the CLOUD Act, this could prove disastrous for all users of U.S.-based AI models.

Although OpenAI is appealing this court order, it might be a good idea to remove your data while you can.

Digital Profiling in the Age of AI

The pieces of our scattered digital selves have long been scraped from the web and clustered into profiles sold to the highest data-hungry bidder.

These profiles are sold with the goal of influencing our behavior. [→] Not just what we buy, but how we think. And the risks are not just individual, but collective too.

Use of such profiles has fuelled extremism and sometimes violence against institutions and minority communities. The Cambridge Analytica scandal famously showed how harvested Facebook profiles of millions of users were used to target voters in the 2016 U.S. presidential election. [→]

And that was before we started using ChatGPT as a relationship therapist.

ChatGPT and other AI models currently pose an even bigger risk to our digital, and therefore physical, safety because of how detailed such profiles can now become, and how readily ChatGPT’s answers are taken as truth.

Who knows what we have given away already? These companies are gaining unprecedented access to information about our work, our homes, and our thoughts. Such power in the wrong hands can wreak havoc.

What could happen:

- The data of users worldwide is subject to jurisdictions and governments with the power to obtain it.

- Users risk consequences of this data being used against them.

- Training datasets and model decisions are not verifiable or auditable by the public. They risk being censored by vested interests or carrying inherent biases.

- Individuals could even be targeted for specific behavior changes through AI conversations, since thoughts can now be nudged more subtly than ever.

We can expect collective and individual behavior change campaigns on a scale we have not seen before.

While the U.S. president is holding the world with a knife to its spine, he has strengthened relationships with these companies and even chose a Vice President to represent their interests. [→]

AI as a Risk to Our Safety

Today Trump arrived in The Hague for the NATO summit, the city where Regen Studio was founded. Mark Rutte, the former Dutch Prime Minister and now Secretary-General of NATO, openly praised Trump [→] for getting NATO countries to agree to spend 5% of GDP on defense, and called the U.S. strikes on Iran ‘truly extraordinary’, while many experts, and even Macron, [→] say the strikes violate international law.

NATO allies are supposed to keep each other safe. [→] However, a lack of digital safety also puts us at physical risk, as the rise in polarization and violence fuelled by digital profiling has shown.

Rutte seems convinced that the Americans are our only shot at safety. But would he share his most intimate details with Trump? Rutte might not, but every ChatGPT user essentially is. Not to mention the potential leaks of sensitive information when employees paste confidential documents into these tools. Wherever we rely on models like ChatGPT, we’ve got a problem: a strategic dependency with no democratic oversight. This is about collective digital and physical sovereignty.

No privacy, no peace. [→]

For the U.S. it might already be too late. With Elon Musk’s Grok now suspected of training on government data feared to include personal information, [→] the risks are escalating. Other countries with power ambitions are following quickly — DeepSeek is now being accused of sending sensitive data to the Chinese government. [→]

In that sense, by legitimizing Trump’s behavior without criticism, Rutte is arguably making allies less safe, unless they stop the flow of their data to places where it might be used against them.

Even while making this blog, ChatGPT refused to generate certain critical or satirical images of Trump, though further testing showed it also refused similar images of Iran’s Supreme Leader, Ayatollah Khamenei, or of Britney Spears. The point stands: we are playing by OpenAI’s rules, which Trump will want to influence one way or another.

Fortunately, some AI models are being built with privacy, transparency, and regional control in mind, such as GPT-NL by TNO in the Netherlands, or Chat Maritaca by researchers from Unicamp in Brazil. Using such tools is one way to practice digital self-defense in AI conversations.

Digital Self-Defense with Mental Models — Pretend ChatGPT is Trump

It sounds ridiculous, I know. But stick with me — this is a practical strategy for anyone who values privacy, dignity, or democratic autonomy in the age of artificial intelligence.

Here’s the idea: imagine that everything you type into ChatGPT is going straight to someone like Donald Trump — or more precisely, to a system that could be accessed or exploited by actors with similar disregard for your privacy or consent.

This isn’t just a metaphor. This “Trump Test” is one of several mental models for protecting your privacy in conversational AI. Others include:

- The Visa Test — Would you say this during a border screening?

- The Newspaper Test — Would you want this published with your name?

- The Blackmail Test — Could this info be used to manipulate you?

A Strategy for Digital Self-Defense

Apart from the ‘Trump Test’ and its variations, there are other things you can do to protect yourself. Here is a quick overview:

- ‘Never say I to an AI’ — Practice conversational hygiene: depersonalizing, abstracting, fictionalizing. It helps maintain a boundary between you and the algorithm.

- ‘Find out who governs’ — Choose transparent and ethical organisations. Opt for open-source models trained on verifiable datasets.

- ‘Keep it on your device’ — Invest in locally run alternatives. Check out Ollama and run it in your console, or try Msty if you want more models or a smoother user interface.
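To make ‘Never say I to an AI’ and ‘Keep it on your device’ a little more concrete, here is a minimal Python sketch, not a complete privacy tool. The hypothetical `depersonalize` function swaps names you list yourself, plus obvious email addresses and phone numbers, for neutral placeholders before text leaves your machine; the regular expressions are illustrative assumptions and will miss plenty. The hypothetical `ask_local_model` function sends a prompt to Ollama’s local HTTP API on its default port 11434, assuming Ollama is running and you have already pulled a model; the model name `llama3` and the example names are placeholders.

```python
import json
import re
import urllib.request

# Illustrative patterns (assumptions, not a complete PII detector):
# they only catch obvious email addresses and phone numbers.
PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    (re.compile(r"\+?\d[\d\s-]{7,}\d"), "[PHONE]"),
]


def depersonalize(text: str, names: list[str]) -> str:
    """Swap the listed names for 'Person A', 'Person B', ... and mask simple identifiers."""
    for i, name in enumerate(names):
        # \b keeps "Sam" from matching inside "Samuel"; matching is case-sensitive.
        text = re.sub(rf"\b{re.escape(name)}\b", f"Person {chr(ord('A') + i)}", text)
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send a prompt to a locally running Ollama server and return its reply.

    Assumes Ollama is listening on its default port and `model` has been pulled.
    """
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    request = urllib.request.Request(
        "http://localhost:11434/api/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        # With streaming disabled, Ollama returns one JSON object whose "response" field holds the text.
        return json.loads(response.read())["response"]


if __name__ == "__main__":
    raw = "Alex and Sam argued. Mail alex@example.com or call +31 6 1234 5678."
    safe = depersonalize(raw, ["Alex", "Sam"])
    print(safe)  # Person A and Person B argued. Mail [EMAIL] or call [PHONE].
    # With Ollama running locally, you could then call: ask_local_model(safe)
```

Regex redaction only catches the easy cases: context such as ‘my wife’ or the city you live in can still identify you, which is why the mental models above matter more than any filter. Running the model locally means even the redacted text never has to leave your device.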

From Generative to Regenerative AI

At Regen Studio, we believe in the possibility of regenerative AI — technology that:

- Helps people grow, learn, and connect.

- Strengthens communities and nature, rather than extracts from them.

- Cannot be used against us.

That last point is crucial. I am not talking about some ‘Skynet’ AI running wild, but humans using AI against other humans.

Because if we don’t define regenerative AI intentionally, it will become extractive by default. We’ve seen this before.

Closing Thoughts

Social media promised us global connection. What we got was manipulation, polarization, and mass surveillance. Let’s not take that same path with AI.

Instead, we must ask:

- Where does my data go?

- Who controls the model?

- Am I putting myself at risk?

Without asking these questions, AI will be weaponized, not democratized.

You don’t need to stop using ChatGPT. I haven’t. But you do need to stop talking to it like a trusted friend or therapist.

Privacy isn’t about paranoia. It’s about preserving the space where your thoughts belong to you.

If you’re building AI tools that deserve to be called regenerative — let’s connect at regenstudio.world.

Want to dive deeper? You can read the full ChatGPT conversation used in creating this blog, with a surprisingly introspective twist at the end.

“I consider myself part of the ‘lost generation’ on the internet. The majority of my data on the worldwide web is completely outside of my control. A digital society with control back in the hands of people themselves is possible — and at Regen Studio I am trying to help create it.”

— Yvo Hunink, Founder Regen Studio · yvo.hunink@regenstudio.world